Video frame interpolation via adaptive convolution

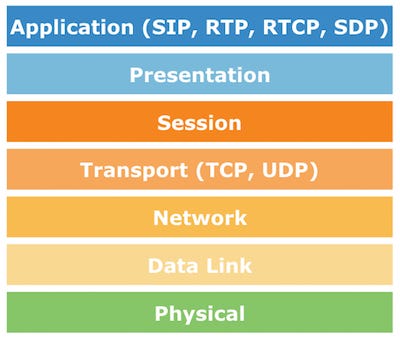

Adaptive convolution treats pixel synthesis for the interpolated frame as a local convolution over the two input frames. Its successor, Adaptive Separable Convolution (AdaSepConv) [2], is one of the state-of-the-art video frame interpolation methods, and a reference implementation of "Video Frame Interpolation via Adaptive Separable Convolution" is available in PyTorch. Other deep approaches include "Deep Video Frame Interpolation using Cyclic Frame Generation". Many methods have also been proposed for the related problem of raising video resolution, including convolution-based, edge-modeling, point spread function (PSF)-based, and learning-based methods. One example is "Resolution enhancement of video sequences by using discrete wavelet transform and illumination compensation" by Sara Izadpanahi, Çağrı Özçınar, Gholamreza Anbarjafari, and Hasan Demirel (Eastern Mediterranean University).
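A minimal sketch of this idea in plain Python, for illustration only. The hypothetical function `adaptive_conv_pixel` synthesizes one output pixel by applying two per-pixel kernels to the co-located patches of the two input frames and summing the results. In the actual method a CNN predicts the kernels; here they are written by hand, and clamping out-of-range coordinates to the border is an assumption of this sketch.

```python
from typing import List

Image = List[List[float]]  # grayscale frame indexed as img[y][x]


def adaptive_conv_pixel(frame1: Image, frame2: Image,
                        k1: Image, k2: Image, x: int, y: int) -> float:
    """Synthesize output pixel (x, y) as a local convolution over both frames.

    k1 and k2 are n x n kernels (n odd) that a network would predict for this
    particular pixel. Each is applied without flipping (a correlation) to the
    n x n patch centred at (x, y) in its frame; coordinates outside the frame
    are clamped to the border.
    """
    n = len(k1)
    r = n // 2
    h, w = len(frame1), len(frame1[0])
    total = 0.0
    for i in range(n):
        for j in range(n):
            yy = min(max(y + i - r, 0), h - 1)
            xx = min(max(x + j - r, 0), w - 1)
            total += k1[i][j] * frame1[yy][xx] + k2[i][j] * frame2[yy][xx]
    return total


# A bright dot moves from x=1 (frame 1) to x=3 (frame 2); the midpoint is x=2.
f1 = [[0.0] * 5 for _ in range(3)]
f2 = [[0.0] * 5 for _ in range(3)]
f1[1][1] = 1.0
f2[1][3] = 1.0
k1 = [[0.0] * 3 for _ in range(3)]
k2 = [[0.0] * 3 for _ in range(3)]
k1[1][0] = 0.5  # look one pixel to the left in frame 1
k2[1][2] = 0.5  # look one pixel to the right in frame 2
print(adaptive_conv_pixel(f1, f2, k1, k2, x=2, y=1))  # 1.0
```

Where the non-zero weights sit encodes the motion, and their values encode how the two frames are blended, so a single kernel replaces the separate motion-estimation and synthesis steps.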

Figure 1: Video frame interpolation.

"Video Frame Interpolation via Adaptive Convolution" by Simon Niklaus, Long Mai, and Feng Liu was presented as a spotlight (session 2-1B) at the IEEE Conference on Computer Vision and Pattern Recognition (CVPR) 2017. Kernel-based methods of this kind estimate a spatially varying kernel for every output pixel, so their memory requirement increases with kernel size. To speed up convolution with spatially varying kernels, a spatially-adaptive separable convolution approach was later presented for video frame interpolation [32]. SDC-Net (video prediction using spatially-displaced convolution) cites this line of work for the related task of video frame interpolation, where predicted sampling kernels are applied to consecutive frames to synthesize the intermediate frame. Classical alternatives for reconstructing high-resolution (HR) video frames include cubic convolution interpolation, frame/edge interpolation approaches that do not introduce new information, and methods that use intuitive features such as edges, ridges, and corners to train a prior dictionary.

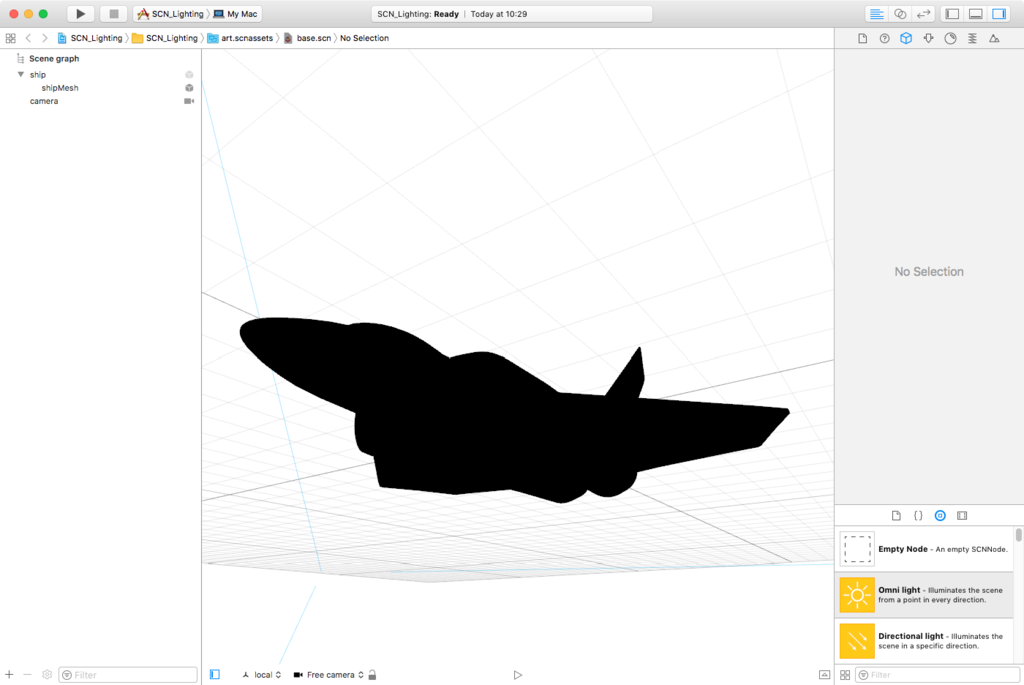

News: Check out our new CVPR 2018 paper on a faster and higher-quality frame interpolation method.

Video frame interpolation typically involves two steps: motion estimation and pixel synthesis. The follow-up paper, "Video Frame Interpolation via Adaptive Separable Convolution" by Simon Niklaus, Long Mai, and Feng Liu, appeared at the IEEE International Conference on Computer Vision (ICCV) 2017. Formulating video interpolation as a single convolution process allows the method to handle challenges such as occlusion, blur, and abrupt brightness change gracefully, and enables high-quality video frame interpolation. In the original adaptive convolution network, each output pixel (the target point) is synthesized with a 41 × 41 filter estimated from a 79 × 79 input patch; in other words, a different filter is applied to each part of the image. Adaptive interpolation is also studied for still images, for example in "Adaptive General Scale Interpolation Based on Weighted Autoregressive Models" by Mading Li, Jiaying Liu, Jie Ren, and Zongming Guo (IEEE Transactions on Circuits and Systems for Video Technology, vol. 25, no. 2, February 2015).

Standard video frame interpolation methods first estimate optical flow between the input frames and then synthesize an intermediate frame guided by that motion. Such a two-step approach depends heavily on the quality of motion estimation. The authors of "Video Frame Interpolation via Adaptive Convolution", Simon Niklaus, Long Mai, and Feng Liu of Portland State University, show that formulating interpolation as a single convolution process avoids this dependency. Task-oriented flow (TOFlow) takes another route and learns motion for the downstream task; it outperforms traditional optical flow on standard benchmarks and on the Vimeo-90K dataset in three video processing tasks: frame interpolation, video denoising/deblocking, and video super-resolution.
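The two-step, flow-based pipeline described above can be sketched as follows, assuming the flows from the intermediate frame to each input frame have already been estimated (flow estimation itself is out of scope). The hypothetical `backward_warp` samples an input frame with bilinear interpolation, and `interpolate_midframe` blends the two warped frames with equal weights; the equal weighting is a simplification that ignores occlusion.

```python
import math
from typing import List, Tuple

Image = List[List[float]]               # img[y][x]
Flow = List[List[Tuple[float, float]]]  # per-pixel (dx, dy)


def bilinear(img: Image, x: float, y: float) -> float:
    """Sample img at a real-valued position, clamping to the image border."""
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    top = (1 - ax) * img[y0][x0] + ax * img[y0][x1]
    bottom = (1 - ax) * img[y1][x0] + ax * img[y1][x1]
    return (1 - ay) * top + ay * bottom


def backward_warp(img: Image, flow: Flow) -> Image:
    """Output pixel (x, y) takes the input value at (x + dx, y + dy)."""
    h, w = len(img), len(img[0])
    return [[bilinear(img, x + flow[y][x][0], y + flow[y][x][1])
             for x in range(w)] for y in range(h)]


def interpolate_midframe(f0: Image, f1: Image,
                         flow_t0: Flow, flow_t1: Flow) -> Image:
    """Warp both inputs toward the middle frame and blend them equally."""
    w0 = backward_warp(f0, flow_t0)
    w1 = backward_warp(f1, flow_t1)
    return [[0.5 * a + 0.5 * b for a, b in zip(r0, r1)]
            for r0, r1 in zip(w0, w1)]


f0 = [[0.0, 1.0, 0.0, 0.0, 0.0]]  # object at x=1
f1 = [[0.0, 0.0, 0.0, 1.0, 0.0]]  # object at x=3
flow_t0 = [[(-1.0, 0.0)] * 5]     # middle frame -> frame 0: one pixel left
flow_t1 = [[(1.0, 0.0)] * 5]      # middle frame -> frame 1: one pixel right
print(interpolate_midframe(f0, f1, flow_t0, flow_t1))  # [[0.0, 0.0, 1.0, 0.0, 0.0]]
```

If the flow is wrong, the warped copies land in different places and the blend shows ghosting, which is why the quality of this pipeline hinges on motion estimation.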

Another way to adapt convolution to motion is to deform the convolutional kernel via learnt offsets. pytorch-sepconv is a fully functional implementation of the work of Niklaus et al.: given two frames, it uses adaptive convolution in a separable manner to interpolate the intermediate frame. The method is described in S. Niklaus, L. Mai, and F. Liu, "Video frame interpolation via adaptive separable convolution," in ICCV, 2017, pp. 261–270. A reproduction of the paper applies the same network structure, trained on a smaller dataset.

Some CNN-based approaches target optical flow, and the frames they interpolate tend to be blurry; generative models currently also lead to blurry results. The adaptive separable convolution paper instead presents a robust video frame interpolation method that combines motion estimation and pixel synthesis into a single process. Related work includes "Long-Term Video Interpolation with Bidirectional Predictive Network".

Interpolation is also a building block of video super-resolution. Consider a frame of a low-resolution video stream, denoted by $f_L$. In one approach, bicubic interpolation is first used to upscale the frame to the desired size. Learning-based alternatives include real-time video scaling with a convolutional neural network, which aims to remove blur and improve reconstruction quality when scaling large datasets from low-resolution to high-resolution frames, and real-time single image and video super-resolution using an efficient sub-pixel convolutional neural network. For general discrete signals, one family of interpolation methods has a computational complexity of O(log N) per output sample for frame interpolation, where N is the frame size, and O(W) per output sample for sliding-window interpolation, where W is the window size.
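An efficient sub-pixel convolutional network upsamples by computing r² feature maps at low resolution and then rearranging them into one map that is r times larger in each direction. The sketch below shows only that rearrangement step (often called pixel shuffle). It assumes the channel ordering used by PyTorch's `torch.nn.PixelShuffle`; the original paper's indexing may order channels differently, and the function name is hypothetical.

```python
from typing import List

Plane = List[List[float]]


def pixel_shuffle(channels: List[Plane], r: int) -> List[Plane]:
    """Rearrange C*r*r planes of size H x W into C planes of size rH x rW.

    Output pixel (y*r + i, x*r + j) of plane c comes from input plane
    c*r*r + i*r + j at position (y, x).
    """
    if len(channels) % (r * r) != 0:
        raise ValueError("number of channels must be divisible by r*r")
    c_out = len(channels) // (r * r)
    h, w = len(channels[0]), len(channels[0][0])
    out = [[[0.0] * (w * r) for _ in range(h * r)] for _ in range(c_out)]
    for c in range(c_out):
        for i in range(r):
            for j in range(r):
                src = channels[c * r * r + i * r + j]
                for y in range(h):
                    for x in range(w):
                        out[c][y * r + i][x * r + j] = src[y][x]
    return out


# Four 1x1 feature maps become one 2x2 image.
print(pixel_shuffle([[[1.0]], [[2.0]], [[3.0]], [[4.0]]], r=2))
# [[[1.0, 2.0], [3.0, 4.0]]]
```

Because all convolutions run at the low resolution and only this cheap reshuffle produces the high-resolution output, the approach suits real-time use.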

"Convolutional Neural Networks for Video Frame Interpolation" by Apoorva Sharma, Kunal Menda, and Mark Koren notes that video frame interpolation has applications in video compression as well as in up-sampling to higher frame rates. Adaptive Separable Convolution claims high-quality results on the video frame interpolation task. Although frame interpolation can be seen as a constrained form of novel view interpolation [6, 19, 44], a variety of dedicated algorithms have been developed for video frame interpolation, and these are the focus of this section. For video super-resolution (VSR), the key problem is how to learn spatio-temporal features effectively and efficiently to produce a super-resolved frame. The traditional and the adaptive normalized convolution algorithms have been applied to SR reconstruction. Data volume is also a challenge: in the NTSC video format, the size of each frame is further multiplied by the display rate of 30 frames per second.
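Normalized convolution weights every sample by a certainty value and divides by the filtered certainty, so missing or unreliable samples (certainty 0) are filled in from their neighbours. The sketch below is the plain, non-adaptive form in 1D with a fixed kernel; the adaptive variant additionally shapes the kernel to local image structure, which is not shown. The function name is hypothetical.

```python
from typing import List, Sequence


def normalized_convolution(signal: Sequence[float], certainty: Sequence[float],
                           kernel: Sequence[float],
                           eps: float = 1e-12) -> List[float]:
    """Compute (a * (c f)) / (a * c) for signal f, certainty c, kernel a.

    The kernel is applied without flipping (correlation), which makes no
    difference for the symmetric kernels usually used. Positions with no
    certain sample under the kernel are set to 0.0.
    """
    r = len(kernel) // 2
    n = len(signal)
    out = []
    for i in range(n):
        num = den = 0.0
        for k, a in enumerate(kernel):
            j = i + k - r
            if 0 <= j < n:
                num += a * certainty[j] * signal[j]
                den += a * certainty[j]
        out.append(num / den if den > eps else 0.0)
    return out


# The middle sample is missing (certainty 0) and is filled from its neighbours.
print(normalized_convolution([1.0, 0.0, 3.0], [1.0, 0.0, 1.0], [1.0, 1.0, 1.0]))
# [1.0, 2.0, 3.0]
```

In multi-frame super-resolution, the same normalization lets irregularly placed samples from several registered frames be fused onto one high-resolution grid.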

Recent approaches merge these two steps into a single convolution process by convolving the input frames with spatially adaptive kernels that account for motion and re-sampling simultaneously; each filter is produced as an output of the network. The adaptive separable convolution method (ICCV 2017, Simon Niklaus et al.) uses a deep fully convolutional neural network that takes two input frames and estimates pairs of 1D kernels for all pixels simultaneously. Separable kernels greatly reduce memory: for a 1080p video frame, using separable kernels that approximate 41 × 41 ones only requires 1.27 GB.
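The memory saving of separable kernels for a 1080p frame can be estimated with back-of-the-envelope arithmetic. This sketch assumes 32-bit (4-byte) floating-point coefficients, one kernel per output pixel for each of the two input frames, and reports gibibytes (2^30 bytes); under those assumptions, separable 41 × 41 kernels need about 1.27 GiB, roughly one twentieth of what full 2D kernels would need. The function name is hypothetical.

```python
def kernel_memory_bytes(height: int, width: int, kernel_size: int,
                        separable: bool, frames: int = 2,
                        bytes_per_coeff: int = 4) -> int:
    """Bytes needed to store one kernel per output pixel for each input frame."""
    if separable:
        coeffs = 2 * kernel_size  # one vertical and one horizontal 1D kernel
    else:
        coeffs = kernel_size * kernel_size  # one full 2D kernel
    return height * width * frames * coeffs * bytes_per_coeff


GIB = 2 ** 30
full_2d = kernel_memory_bytes(1080, 1920, 41, separable=False)
sep_1d = kernel_memory_bytes(1080, 1920, 41, separable=True)
print(f"2D kernels:        {full_2d / GIB:.2f} GiB")  # 25.97 GiB
print(f"separable kernels: {sep_1d / GIB:.2f} GiB")   # 1.27 GiB
```

The ratio is simply n² / 2n = n / 2 coefficients, about 20.5 for n = 41, which is why larger kernels (and therefore larger motions) become affordable.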

Frame interpolation is a classic computer vision problem and is important for applications such as novel view interpolation. Image interpolation, more generally, is a basic operation in image processing. Some earlier work uses frame interpolation as a supervision signal to learn CNN models for optical flow. The adaptive convolution paper instead uses a CNN to perform frame interpolation directly: it treats pixel interpolation as a convolution over corresponding image patches in the two adjacent frames and uses a fully convolutional network to estimate spatially-adaptive convolution kernels. These kernels capture both the motion and the interpolation coefficients and are convolved directly with the input images to synthesize the intermediate frame. In a different setting, lucky imaging differs from video deblurring in that it assumes the camera is static and aims to obtain only one best image.

Before joining Adobe, Long Mai obtained his Ph.D. from Portland State University, where he was a member of the Computer Graphics & Vision Lab, advised by Professor Feng Liu.

Recent approaches synthesize a pixel by convolving input patches with a predicted kernel. This is challenging, especially when objects in the scene move in different ways. torch-sepconv is a reference implementation of Video Frame Interpolation via Adaptive Separable Convolution [1] using Torch. In one such network, all layers after the first use 3 × 3 convolutional kernels.

Video super-resolution (SR) differs from image super-resolution: video frames not only exhibit spatial correlation within each frame but also contain temporal information across neighbouring frames. Single-image SR treats each video frame independently and ignores the intrinsic temporal dependency between frames, which plays a very important role in video SR. Motion is also exploited in video coding: one image coding apparatus band-limits video frame signals in time by filtering them with a predetermined temporal cutoff frequency, first determining a motion vector that represents the movement of an object between a video frame and its previous frame.

The goal of the adaptive convolution paper is to train a fully convolutional neural network that infers a convolution kernel from two consecutive video patches. SDC-Net, a video prediction method, goes further and learns both a motion vector and a kernel for each pixel. Other approaches to obtaining a reconstructed HR video frame include de-convolution methods based on image formation models and multiple-frame super-resolution. For still images, one enlargement method modifies the 4-point interpolatory subdivision scheme by replacing its interpolation rule with a tangent-constrained Hermite interpolation; in the surface case, the subdivision is derived from a Ferguson patch. Long Mai is a research scientist at the Creative Intelligence Lab, Adobe Research.
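A rough sketch of the spatially-displaced convolution idea for a single output pixel, under simplifying assumptions: one grayscale frame, a given per-pixel motion vector (u, v) and kernel, and bilinear sampling with border clamping. The kernel is centred on the displaced position rather than on the pixel itself. This illustrates the concept and is not SDC-Net's implementation; all names are hypothetical.

```python
import math
from typing import List

Image = List[List[float]]


def bilinear(img: Image, x: float, y: float) -> float:
    """Sample img at a real-valued position, clamping to the image border."""
    h, w = len(img), len(img[0])
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    top = (1 - ax) * img[y0][x0] + ax * img[y0][x1]
    bottom = (1 - ax) * img[y1][x0] + ax * img[y1][x1]
    return (1 - ay) * top + ay * bottom


def sdc_pixel(frame: Image, kernel: Image, x: int, y: int,
              u: float, v: float) -> float:
    """Apply a per-pixel kernel around the displaced location (x + u, y + v)."""
    ry, rx = len(kernel) // 2, len(kernel[0]) // 2
    total = 0.0
    for i, row in enumerate(kernel):
        for j, weight in enumerate(row):
            total += weight * bilinear(frame, x + u + (j - rx), y + v + (i - ry))
    return total


frame = [[0.0, 0.0, 2.0, 4.0, 0.0]]
# Half-pixel motion to the right with an identity (1x1) kernel.
print(sdc_pixel(frame, [[1.0]], x=2, y=0, u=0.5, v=0.0))  # 3.0
```

Splitting the work this way lets the motion vector handle large displacements while a small kernel handles sub-pixel detail, instead of one very large kernel doing both.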

SDC-Net presents a spatially-displaced convolution (SDC) module for video frame prediction. The following image briefly shows the authors' idea.

For one-dimensional discrete signals, one family of interpolation methods is reported to outperform other existing discrete signal interpolation methods in interpolation accuracy and in the flexibility of the interpolation kernel design. In fractal interpolation, an image is encoded into fractal codes via fractal compression and subsequently decompressed at a higher resolution.

Adaptive separable convolution formulates frame interpolation as local separable convolution over the input frames using pairs of 1D kernels. Earlier, [19] focused on the video frame interpolation task via adaptive convolution with full 2D kernels. One study reports that, in its experiments, kernel-based approaches were effective at keeping objects intact as they are transformed. By contrast, standard approaches to computing interpolated (in-between) frames in a video sequence require accurate pixel correspondences between images, e.g. using optical flow, as noted in "Phase-Based Frame Interpolation for Video" by Simone Meyer, Oliver Wang, Henning Zimmer, Max Grosse, and Alexander Sorkine-Hornung (ETH Zurich and Disney Research Zurich). To handle challenges such as occlusion, bidirectional flow between the two input frames is often estimated and used to warp and blend the input frames.

Related resolution-enhancement work includes "Video Super-Resolution via Deep Draft-Ensemble Learning" by Renjie Liao, Xin Tao, Ruiyu Li, Ziyang Ma, and Jiaya Jia (The Chinese University of Hong Kong and University of Chinese Academy of Sciences) and a collaborative adaptive Wiener filter for multi-frame super-resolution. The resolution independence of a fractal-encoded image can be used to increase its display resolution. A single frame in the common NTSC video format, used on television sets throughout North America, consists of two fields. The latest MPEG video compression standard, HEVC, brought a further improvement in compression.

Long joined Adobe in June 2017.
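Why a pair of 1D kernels can stand in for a 2D kernel: if the 2D kernel is the outer product of a vertical kernel k_v and a horizontal kernel k_h, applying it to a patch gives the same value as filtering each row with k_h and then combining the row responses with k_v, while storing 2n instead of n² coefficients. A small check in plain Python follows; the helper names are hypothetical, and in the real method a network predicts the 1D kernels for every pixel.

```python
from typing import List, Sequence

Patch = List[List[float]]


def outer(kv: Sequence[float], kh: Sequence[float]) -> Patch:
    """2D kernel formed as the outer product kv * kh^T."""
    return [[a * b for b in kh] for a in kv]


def apply_2d(patch: Patch, kernel: Patch) -> float:
    """Weighted sum of an n x n patch with an n x n kernel."""
    return sum(k * p for krow, prow in zip(kernel, patch)
               for k, p in zip(krow, prow))


def apply_separable(patch: Patch, kv: Sequence[float],
                    kh: Sequence[float]) -> float:
    """Same result with two 1D passes: rows with kh, then columns with kv."""
    row_responses = [sum(h * p for h, p in zip(kh, prow)) for prow in patch]
    return sum(v * r for v, r in zip(kv, row_responses))


def interpolate_pixel(p1: Patch, p2: Patch,
                      k1v: Sequence[float], k1h: Sequence[float],
                      k2v: Sequence[float], k2h: Sequence[float]) -> float:
    """Output pixel as the sum of separable convolutions over both input patches."""
    return apply_separable(p1, k1v, k1h) + apply_separable(p2, k2v, k2h)


patch = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0], [7.0, 8.0, 9.0]]
kv, kh = [0.25, 0.5, 0.25], [0.5, 0.0, 0.5]
print(apply_2d(patch, outer(kv, kh)), apply_separable(patch, kv, kh))  # 5.0 5.0
print(2 * 41, 41 * 41)  # coefficients per kernel: 82 separable vs 1681 full
```

The trade-off is expressiveness: only rank-1 2D kernels can be represented exactly, which the separable method accepts in exchange for the large memory saving.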

Video frame interpolation algorithms typically estimate optical flow or one of its variants and then use it to guide the synthesis of an intermediate frame between two consecutive original frames. More recent methods formulate frame interpolation [31, 32] or extrapolation [9, 42, 5] as a convolution process and estimate the convolution with neural networks. For example, [19] considers frame interpolation as a local convolution over the two input frames and uses a CNN to learn a spatially-adaptive convolution kernel for each pixel. Compared to the recent convolution approach that uses 2D kernels [36] (b), the separable convolution methods, especially the one trained with perceptual loss (d), use 1D kernels that allow full-frame interpolation and produce higher-quality results. Figures 2 and 3 show visual comparisons of single-frame interpolation results on the UCF101 dataset. For video prediction, [25] proposed MCNet, a deep generative model that extracts features of the last frame as content information and separately encodes temporal information, and [11] developed a dual motion Generative Adversarial Network (GAN). "Video Frame Interpolation and Extrapolation" by Zibo Gong (Stanford University) studies both tasks.

For resolution enhancement, denote the bicubically interpolated frame as $\tilde{f}_H$. Then

$$\tilde{f}_H = \Gamma_B(f_L) \qquad (1)$$

where $\Gamma_B$ is the interpolation function with a bicubic kernel, applied with the chosen enlargement factor. The eye's perception of display resolution can be affected by a number of factors, including image resolution and optical resolution.
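Equation (1) can be made concrete with cubic convolution. The sketch below assumes Keys' cubic convolution kernel with a = -0.5 (a common choice; which bicubic kernel is meant is not stated here), border clamping, and pixel-centre alignment when resizing. Bicubic upscaling is separable, so the hypothetical `bicubic_upscale` resizes every row and then every column.

```python
import math
from typing import List, Sequence

Image = List[List[float]]


def cubic_weight(s: float, a: float = -0.5) -> float:
    """Keys' cubic convolution kernel W(s)."""
    s = abs(s)
    if s <= 1.0:
        return (a + 2) * s ** 3 - (a + 3) * s ** 2 + 1
    if s < 2.0:
        return a * s ** 3 - 5 * a * s ** 2 + 8 * a * s - 4 * a
    return 0.0


def interp_1d(samples: Sequence[float], x: float, a: float = -0.5) -> float:
    """Cubic-convolution value at real position x from the 4 nearest samples."""
    n = len(samples)
    base = math.floor(x)
    total = 0.0
    for k in range(base - 1, base + 3):
        kk = min(max(k, 0), n - 1)  # clamp at the borders
        total += samples[kk] * cubic_weight(x - k, a)
    return total


def resize_1d(samples: Sequence[float], factor: int) -> List[float]:
    """Upscale a 1D signal; output i sits at source coordinate (i + 0.5) / factor - 0.5."""
    return [interp_1d(samples, (i + 0.5) / factor - 0.5)
            for i in range(len(samples) * factor)]


def bicubic_upscale(img: Image, factor: int) -> Image:
    """Sketch of f_H = Gamma_B(f_L): resize every row, then every column."""
    rows = [resize_1d(r, factor) for r in img]
    cols = [resize_1d(c, factor) for c in zip(*rows)]
    return [list(r) for r in zip(*cols)]


print(interp_1d([0.0, 1.0, 2.0, 3.0, 4.0], 1.5))  # 1.5
```

The kernel is 1 at s = 0 and 0 at every other integer, so original samples are reproduced exactly, and its weights sum to 1, so flat regions stay flat after upscaling.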

The main idea of the adaptive convolution paper is that motion-compensated frame interpolation can be implemented by a simple convolution operation. In that CVPR 2017 paper, the authors use a CNN to estimate 2D convolution kernels: it estimates a spatially-adaptive kernel for each output pixel and convolves the kernels with the input frames to generate the interpolated frame. Related video super-resolution methods perform motion compensation via a dynamic local filter network, which processes the input images with dynamically generated filter kernels, and an extended 3D adaptive normalized convolution (NC) can up-scale a video sequence along the temporal dimension to obtain more HR frames. Deep learning has also shown great potential in image generation, such as texture synthesis, style transfer, and generative adversarial models. Further work in this area includes "Super SloMo: High Quality Estimation of Multiple Intermediate Frames for Video Interpolation" by Huaizu Jiang, Deqing Sun, Varun Jampani, Ming-Hsuan Yang, Erik Learned-Miller, and Jan Kautz (CVPR 2018, spotlight), "Video frame synthesis using deep voxel flow", and "A Temporally-Aware Interpolation Network for Video Frame Inpainting" by Ximeng Sun, Ryan Szeto, and Jason J. Corso.
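For reference, the two formulations can be written as follows; the notation is an assumption of this summary rather than a quotation from either paper. Let $\mathbf{P}_1(x,y)$ and $\mathbf{P}_2(x,y)$ be the $n \times n$ patches centred at $(x,y)$ in the two input frames. Adaptive convolution estimates per-pixel kernels $\mathbf{K}_1(x,y)$ and $\mathbf{K}_2(x,y)$ and computes the interpolated pixel as

$$\hat{I}(x,y) = \mathbf{K}_1(x,y) * \mathbf{P}_1(x,y) + \mathbf{K}_2(x,y) * \mathbf{P}_2(x,y)$$

Adaptive separable convolution approximates each 2D kernel by the outer product of a vertical and a horizontal 1D kernel,

$$\mathbf{K}_i(x,y) \approx \mathbf{k}_{i,v}(x,y)\,\mathbf{k}_{i,h}(x,y)^{\top}, \qquad i \in \{1, 2\},$$

so each kernel needs $2n$ coefficients instead of $n^2$.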

Video frame interpolation is a classic computer vision problem. Many interpolation methods exist, but most of them have high computational complexity and are not suitable for real-time applications. A new adaptive interpolation scheme for image upscaling has also been proposed. "Lucky imaging" or "lucky exposure" is a classic interpolation-based method that takes many short-exposure images and generates a sharp image by selecting and merging a few of the best ones. Display also matters: one factor in the perception of display resolution is the screen's rectangular shape, expressed as its aspect ratio.
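A toy version of the select-and-merge step of lucky imaging. It assumes a static camera (so no frame registration is needed) and scores sharpness by the energy of a discrete 4-neighbour Laplacian; the sharpness measure, the parameter `k`, and the function names are assumptions of this sketch, not part of any specific lucky-imaging method.

```python
from typing import List

Image = List[List[float]]


def laplacian_energy(img: Image) -> float:
    """Sum of squared 4-neighbour Laplacian responses over interior pixels."""
    h, w = len(img), len(img[0])
    energy = 0.0
    for y in range(1, h - 1):
        for x in range(1, w - 1):
            lap = (img[y - 1][x] + img[y + 1][x] + img[y][x - 1]
                   + img[y][x + 1] - 4 * img[y][x])
            energy += lap * lap
    return energy


def lucky_image(frames: List[Image], k: int) -> Image:
    """Average the k sharpest frames (camera assumed static, no registration)."""
    best = sorted(frames, key=laplacian_energy, reverse=True)[:k]
    h, w = len(best[0]), len(best[0][0])
    return [[sum(f[y][x] for f in best) / len(best) for x in range(w)]
            for y in range(h)]


def dot(value: float) -> Image:
    """3x3 frame with a single bright centre pixel."""
    return [[0.0, 0.0, 0.0], [0.0, value, 0.0], [0.0, 0.0, 0.0]]


blurry = [[0.5] * 3 for _ in range(3)]
result = lucky_image([blurry, dot(1.0), dot(0.8)], k=2)
print(result[1][1])  # centre of the average of the two sharpest frames
```

Averaging only the selected frames keeps their sharpness while still reducing noise, which is the trade-off the method relies on.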

For evaluation, the TOFlow authors built Vimeo-90K, a large-scale, high-quality video dataset for low-level video processing. In one hierarchical interpolation network, the flow interpolation CNN uses 7 × 7 and 5 × 5 kernels in its first and second hierarchies, respectively. For video super-resolution, one group proposed "Video Super-Resolution via Dynamic Local Filter Network" and its upgraded edition, "Video Super-Resolution with Compensation in Feature Extraction".

A reproduction of the adaptive separable convolution paper reports that the resulting neural network, trained with a novel loss function, achieves performance comparable to the original work. Super-resolving a low-resolution video, namely video super-resolution (SR), is usually handled by either single-image SR or multi-frame SR. Distributed video coding for wireless video sensor networks relies on a frame interpolation model to produce side information. Interpolation has also been implemented in hardware: previously memorized weight factors for a particular site are retrieved under the control of addressing circuits, multiplied with the original pixel data of that site prior to convolution, and the products are added together to derive the new pixel data. Finally, a novel image enlargement method based on a modified interpolatory subdivision scheme has been proposed.
